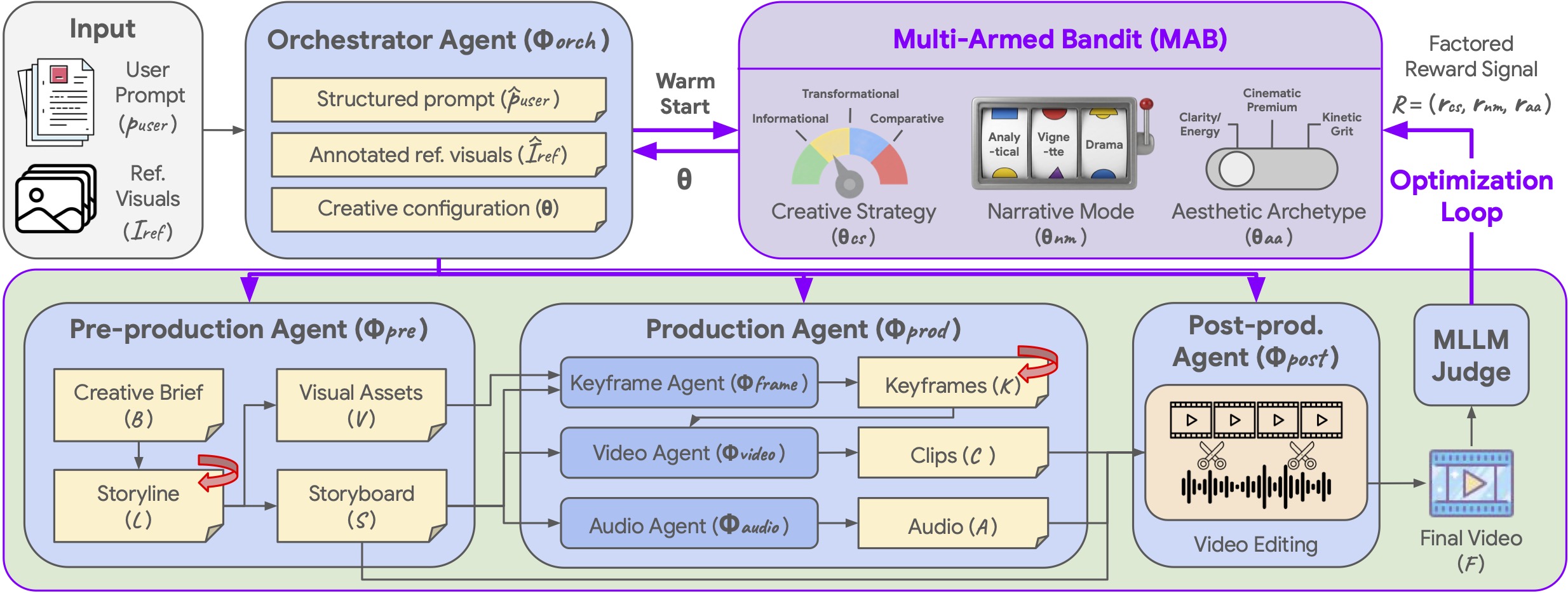

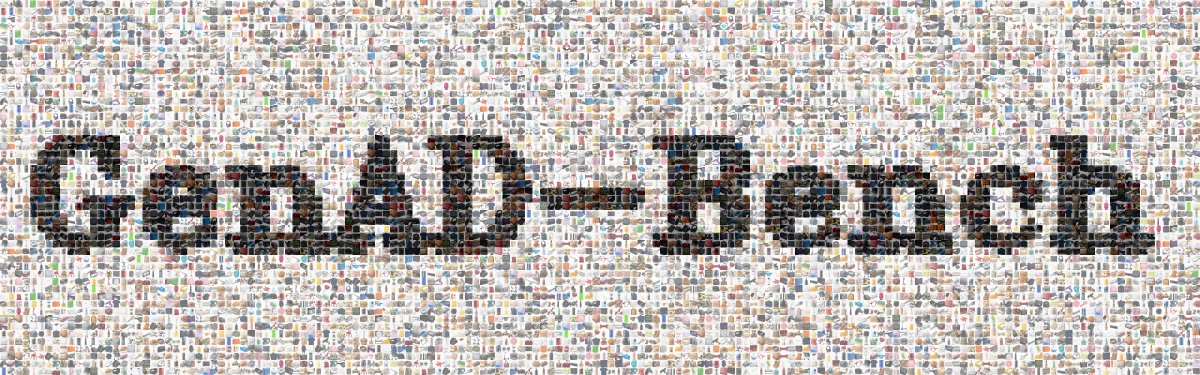

We evaluate Co-Director against monolithic models and prior agentic frameworks (AniMaker, MovieAgent) using a multi-dimensional MLLM-as-a-Judge suite. Our multi-armed bandit (MAB) formulation ensures efficient convergence toward optimal configurations.
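The source does not say which bandit algorithm Co-Director uses. The following is therefore only a minimal sketch, not the authors' method. It treats each candidate configuration as an arm and spends a fixed budget of generate-and-judge rounds using textbook UCB1. The function `ucb1_search`, the configuration names, and the `evaluate` callback are all hypothetical. The callback stands in for an MLLM-judge score normalized to [0, 1].

```python
import math
from typing import Callable, List, Sequence, Tuple


def ucb1_search(
    configs: Sequence[str],
    evaluate: Callable[[str], float],
    budget: int,
) -> Tuple[str, List[int]]:
    """Hypothetical UCB1 search over candidate configurations.

    configs:  candidate configurations (the bandit arms)
    evaluate: stand-in for a judge score in [0, 1] for one generated video
    budget:   number of generate-and-judge rounds
    Returns the best-scoring configuration seen and per-arm pull counts.
    """
    k = len(configs)
    counts = [0] * k      # times each arm has been tried
    sums = [0.0] * k      # summed rewards per arm
    best_score, best_idx = -math.inf, 0

    for t in range(budget):  # t = number of pulls made so far
        if t < k:
            arm = t  # round-robin: try every arm once first
        else:
            # UCB1 index: empirical mean + sqrt(2 ln t / n_i)
            arm = max(
                range(k),
                key=lambda i: sums[i] / counts[i]
                + math.sqrt(2.0 * math.log(t) / counts[i]),
            )
        reward = evaluate(configs[arm])
        counts[arm] += 1
        sums[arm] += reward
        if reward > best_score:
            best_score, best_idx = reward, arm

    return configs[best_idx], counts


# Toy, deterministic judge scores (made up for illustration only).
toy_scores = {"cfg_a": 0.2, "cfg_b": 0.5, "cfg_c": 0.9}
best, counts = ucb1_search(list(toy_scores), toy_scores.__getitem__, budget=10)
print(best, counts)
```

A random-search baseline samples configurations without regard to earlier scores. UCB1 instead concentrates later rounds on arms with high judged scores, while the exploration bonus keeps rarely tried arms in contention. Note that when the budget is smaller than the number of arms, this sketch never leaves its initial round-robin phase.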

Table 1: Evaluation on GenAD-Bench

Table 1 compares Co-Director with proprietary, open-source, and agentic baselines using the automated MLLM-as-a-Judge metrics. Co-Director achieves the highest score on every metric. This includes preserving brand assets (VAF) and tailoring videos to their target demographics (DA). It also has the best average: 81.4, versus 65.3 for the strongest prior system, MovieAgent.

| Method | VAF ↑ | DA ↑ | MA ↑ | VQ ↑ | Avg. ↑ |
|---|---|---|---|---|---|
| Proprietary Models | | | | | |
| Creatify | 23.2 | 16.2 | 19.5 | 29.5 | 22.1 |
| HeyGen | 42.9 | 59.3 | 39.5 | 45.0 | 46.7 |
| Kling 3.0 Omni | 62.0 | 70.3 | 56.0 | 45.3 | 58.4 |
| Veo 3.1 | 60.0 | 80.8 | 63.2 | 50.5 | 63.6 |
| Wan 2.6 | 67.0 | 71.5 | 62.5 | 58.9 | 65.0 |
| Open-Source Models | | | | | |
| LTX-2.3 | 23.5 | 56.0 | 30.6 | 24.4 | 33.6 |
| AniMaker | 53.1 | 81.3 | 60.3 | 53.9 | 62.2 |
| MovieAgent | 61.2 | 81.3 | 66.4 | 52.4 | 65.3 |
| Base Agentic Pipeline (T=1) | 68.5 | 78.4 | 67.1 | 59.9 | 68.5 |
| Random Search Baseline (T=4) | 77.0 | 85.8 | 75.6 | 64.1 | 75.7 |
| Co-Director (T=4) | 82.1 | 91.4 | 82.0 | 70.2 | 81.4 |

All metrics are scaled to [0, 100]; higher is better. VAF: Visual Asset Fidelity, DA: Demographic Alignment, MA: Marketing Appeal, VQ: Visual Quality.
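The table does not state how Avg. is computed. This short check assumes it is the unweighted mean of the four metrics and recomputes it for three rows. The results agree with the reported Avg. to within 0.1. For example, Random Search gives 75.625 against a reported 75.7. That would be consistent with averaging unrounded per-metric scores. `TABLE1` and `unweighted_avg` are illustrative names, not part of the original evaluation code.

```python
from statistics import fmean

# Per-metric scores (VAF, DA, MA, VQ) and reported Avg., copied from Table 1.
TABLE1 = {
    "Base Agentic Pipeline (T=1)": ((68.5, 78.4, 67.1, 59.9), 68.5),
    "Random Search Baseline (T=4)": ((77.0, 85.8, 75.6, 64.1), 75.7),
    "Co-Director (T=4)": ((82.1, 91.4, 82.0, 70.2), 81.4),
}


def unweighted_avg(scores):
    """Assumed definition of Avg.: plain mean of the four metric scores."""
    return fmean(scores)


for method, (scores, reported) in TABLE1.items():
    recomputed = unweighted_avg(scores)
    print(f"{method}: recomputed {recomputed:.3f}, reported {reported}")
```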

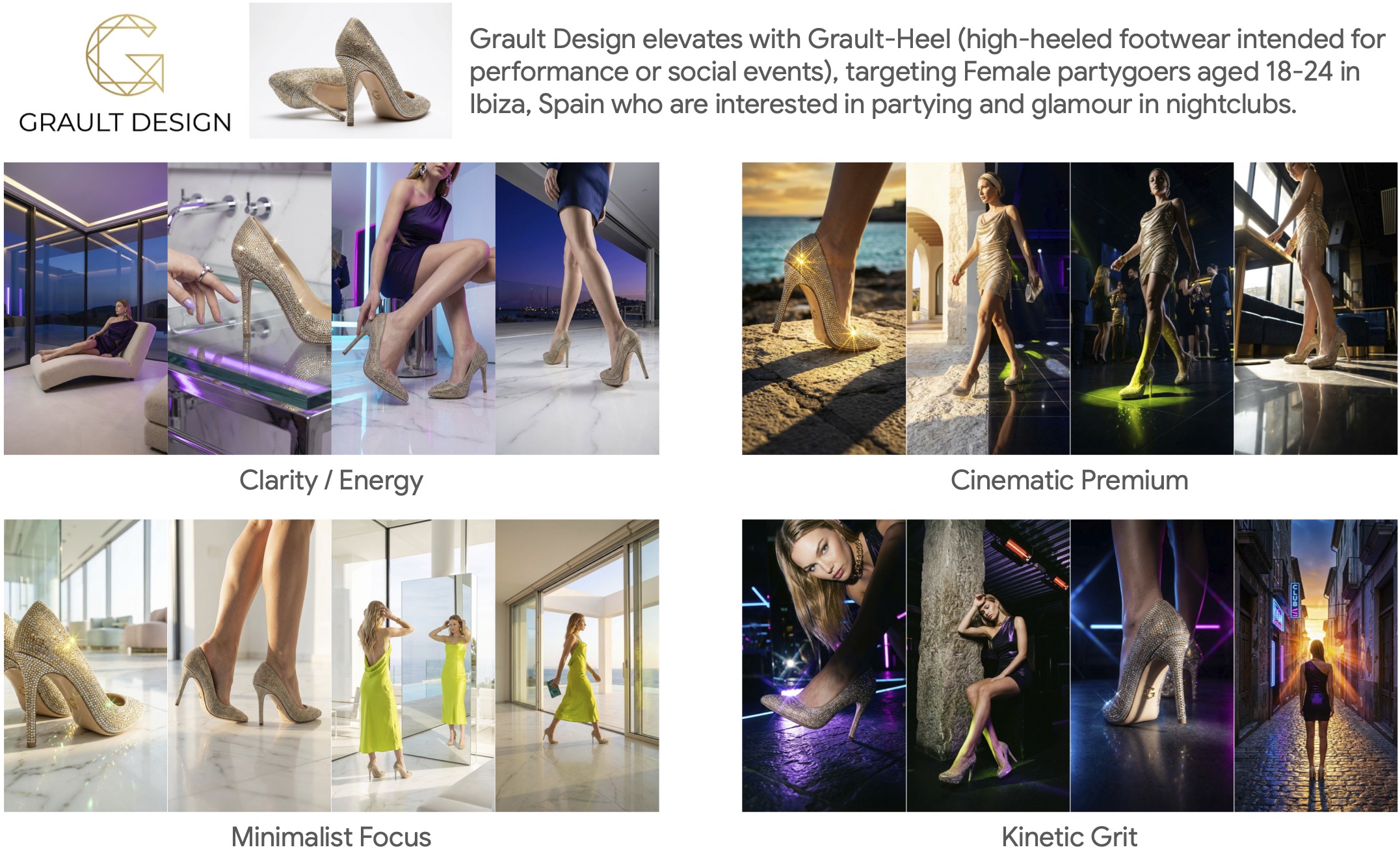

Mean Opinion Scores (MOS)

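To check that these gains hold up under human judgment, we asked human raters to score each video on a 1 to 5 scale along the same four dimensions. Co-Director receives the highest mean score on every dimension. It also has the best average: 3.96, versus 3.71 for the strongest baseline, Veo 3.1.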

| Method | VAF | DA | MA | VQ | Avg. |
|---|---|---|---|---|---|
| AniMaker | 3.34 | 3.71 | 2.72 | 2.50 | 3.07 |
| MovieAgent | 3.64 | 4.06 | 2.85 | 2.31 | 3.22 |
| Wan 2.6 | 3.84 | 3.65 | 3.13 | 3.29 | 3.48 |
| Kling 3.0 Omni | 3.93 | 4.13 | 3.07 | 3.21 | 3.59 |
| Veo 3.1 | 3.90 | 4.20 | 3.40 | 3.32 | 3.71 |
| Co-Director (Ours) | 4.22 | 4.41 | 3.65 | 3.58 | 3.96 |

Average of 5 human raters per video on a [1–5] scale.
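The footnote says each score averages 5 human raters per video. The sketch below shows one way to perform that aggregation under two assumptions. First, per-video means are averaged uniformly over all of a method's videos; the source does not say how videos are pooled. Second, Avg. is the unweighted mean of the four dimensions. The helpers `mos` and `mos_table` are hypothetical. The ratings are made-up toy values, not study data.

```python
from statistics import fmean

DIMENSIONS = ("VAF", "DA", "MA", "VQ")


def mos(ratings_per_video):
    """Mean opinion score for one method on one dimension.

    ratings_per_video: one list of 1-5 ratings per video (one entry per rater).
    Averages the raters for each video, then averages over videos.
    """
    for ratings in ratings_per_video:
        if not all(1 <= r <= 5 for r in ratings):
            raise ValueError("ratings must lie on the 1-5 scale")
    per_video = [fmean(r) for r in ratings_per_video]
    return fmean(per_video)


def mos_table(ratings):
    """ratings maps each dimension to its ratings_per_video; adds 'Avg.'."""
    row = {dim: mos(ratings[dim]) for dim in DIMENSIONS}
    row["Avg."] = fmean([row[dim] for dim in DIMENSIONS])
    return row


# Toy data: two videos, five raters each (illustrative values only).
toy_ratings = {
    "VAF": [[4, 5, 4, 4, 5], [3, 4, 4, 4, 4]],
    "DA": [[5, 5, 4, 5, 4], [4, 4, 5, 4, 4]],
    "MA": [[3, 4, 3, 4, 3], [4, 3, 4, 3, 4]],
    "VQ": [[3, 3, 4, 3, 4], [4, 4, 3, 3, 3]],
}
row = mos_table(toy_ratings)
print(row)
```

Every video has the same number of raters, so averaging per video first and then over videos gives the same result as pooling all ratings. The two orders diverge only when rater counts differ between videos.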